3D Scanning for Surface Information

Scanned Specular?

A lot of people hear and read about various ways to extract 'Specular' and 'Roughness' maps directly from photogrammetry scans using cross and parallel polarization. In reality, it's not that simple.

When we talk about extracting this kind of data, what we are actually extracting is surface information. It's not explicitly a specular or roughness map, but rather information derived from the specular response of the surface in question.

This information can contain a whole bunch of unknowns, so it is important to understand what may be contained within the data you capture.

Extraction of Surface Information

The way a (linear) polarizer works is that it only passes light oscillating along one axis and blocks the perpendicular component, so if you emit light through a polarizer it will be limited to ONE axis.

So by filtering the light that bounces back off a surface through a second polarizer, oriented either in line with or 90 degrees off from the light's polarizer, we can get one of two effects.

I'm using albedo and specular to describe the results here, but it's fairly simple.

We are assuming that '100%' is the light AFTER the first polarizer, i.e. the light that actually hits the surface we are capturing.

Cross polarized image = 50% albedo, 0% specular

Parallel polarized image = 50% albedo, 100% specular
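
Where those percentages come from can be sketched with a tiny idealized model. This is only an illustration under stated assumptions: both polarizers are ideal, the specular reflection keeps the light's polarization (so it follows Malus's law at the second polarizer), and the diffuse/albedo light is fully depolarized (so half of it passes at any angle). Real surfaces, metals in particular, will deviate from this. The function names are made up for this example.

```python
import math

def analyzer_transmission(angle_deg):
    """Malus's law: fraction of linearly polarized light that passes an
    ideal polarizer rotated angle_deg away from the light's own axis."""
    return math.cos(math.radians(angle_deg)) ** 2

# Assumption: diffuse (albedo) light is fully depolarized, so an ideal
# polarizer on the lens passes half of it regardless of orientation.
DIFFUSE_TRANSMISSION = 0.5

def captured_fractions(angle_deg):
    """Return (albedo, specular) fractions that reach the sensor."""
    return DIFFUSE_TRANSMISSION, analyzer_transmission(angle_deg)

cross = captured_fractions(90)    # lens polarizer 90 degrees off the light's
parallel = captured_fractions(0)  # lens polarizer in line with the light's

print("cross    (albedo, specular):", cross)
print("parallel (albedo, specular):", parallel)
```

Under these assumptions the cross polarized case keeps half the albedo and effectively none of the specular, while the parallel case keeps half the albedo and all of the specular.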

If you were to combine the two you would get a full 100%/100% of both channels. HOWEVER, if we subtract our cross polarized image from our parallel polarized image, we are left with 0% albedo and 100% specular.

This is an issue though, as our baseline exposure is actually at 50%, so we need to halve our specular image to bring it in line with our albedo. That leaves us with 0% albedo, 50% specular.
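
As a rough sketch, the subtract-then-halve step looks like this in numpy. It assumes both images are linear (not gamma encoded) floating point data, shot at the same exposure and aligned pixel for pixel. `extract_specular` is a name invented for this example, not part of any tool.

```python
import numpy as np

def extract_specular(parallel, cross):
    """Subtract the cross polarized image from the parallel polarized one,
    then halve the result to match the 50% albedo exposure.

    Assumes linear, aligned, same-exposure images of identical shape."""
    parallel = np.asarray(parallel, dtype=np.float32)
    cross = np.asarray(cross, dtype=np.float32)
    # Sensor noise can push the difference slightly negative, so clamp at 0.
    difference = np.clip(parallel - cross, 0.0, None)
    return 0.5 * difference

# Tiny synthetic check: build the two captures from known components.
albedo = np.array([[0.2, 0.8]], dtype=np.float32)
specular = np.array([[0.6, 0.0]], dtype=np.float32)
cross_img = 0.5 * albedo                 # 50% albedo, 0% specular
parallel_img = 0.5 * albedo + specular   # 50% albedo, 100% specular

spec_map = extract_specular(parallel_img, cross_img)
print(spec_map)  # half of the specular component, no albedo left
```

If your source images are 8-bit sRGB, convert them to linear floats first, otherwise the subtraction will not cancel the albedo cleanly.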

Now if we look at this we can see that it not only contains the information about the specular response, but also has all sorts of other data within it, muddying the information we can extract.

Looking at that vase, we can see that it contains the following:

- Specular information

- Color information

- Surface roughness information

- Several strong highlights from errors during capture

- Inconsistent coverage of light

So while this may actually work for some uses out of the box, to get something reasonable we first need to run a bunch of filters to extract maps that we can then use as bases to build our spec/rough/gloss/metallic maps.

That leads me onto a slight tangent.
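
To give an idea of what "a bunch of filters" could mean, here is one possible cleanup pass in plain numpy. It is a sketch, not the exact process used for the scans in this post: it drops the color, clamps capture hot spots at a percentile, and evens out the lighting by dividing by a heavily blurred copy. `box_blur`, `clean_specular` and all the default values are assumptions picked for illustration.

```python
import numpy as np

def box_blur(img, radius):
    """Separable box blur with edge padding (numpy only)."""
    size = 2 * radius + 1
    kernel = np.ones(size) / size
    padded = np.pad(img, radius, mode="edge")
    # Blur along rows, then along columns; "valid" trims the padding again.
    rows = np.apply_along_axis(
        lambda r: np.convolve(r, kernel, mode="valid"), 1, padded)
    return np.apply_along_axis(
        lambda c: np.convolve(c, kernel, mode="valid"), 0, rows)

def clean_specular(spec_rgb, highlight_percentile=99.0, blur_radius=8, eps=1e-6):
    """Turn a raw extracted specular image (H, W, 3) into a 0..1 base map."""
    spec_rgb = np.asarray(spec_rgb, dtype=np.float64)
    # 1. Drop the color information by averaging the channels.
    intensity = spec_rgb.mean(axis=2)
    # 2. Clamp the strong highlights left over from capture errors.
    ceiling = np.percentile(intensity, highlight_percentile)
    intensity = np.minimum(intensity, ceiling)
    # 3. Even out inconsistent light coverage: divide by the low-frequency
    #    version of the image, then restore the overall brightness.
    low = box_blur(intensity, blur_radius)
    flat = intensity / (low + eps) * low.mean()
    # 4. Normalize into the 0..1 range.
    return np.clip(flat / max(flat.max(), eps), 0.0, 1.0)

rng = np.random.default_rng(0)
demo = clean_specular(rng.random((32, 32, 3)))
print(demo.shape, demo.min(), demo.max())
```

Be aware that step 3 also flattens any real large-scale variation in the surface, so the blur radius is a judgment call per scan.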

Metallic maps from (polarized) scans

Scanned surfaces are inherently NOT meant for the metallic workflow.

The way metalness works is the complete opposite of how a metal object is scanned. In metalness we want our metal surface to have all of the color and intensity information within the albedo. A polarized scan does the opposite: the intensity is removed and only the base color is left.

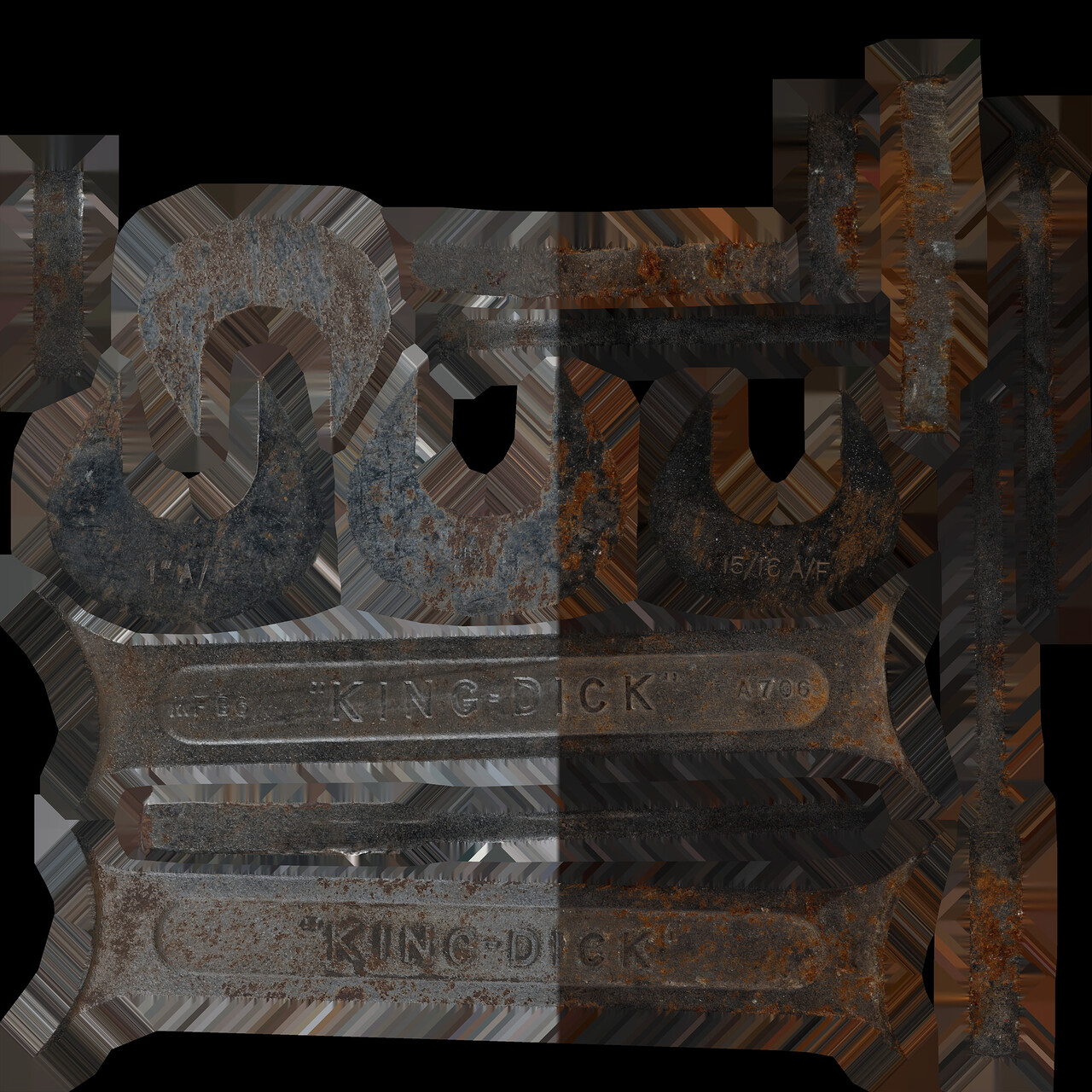

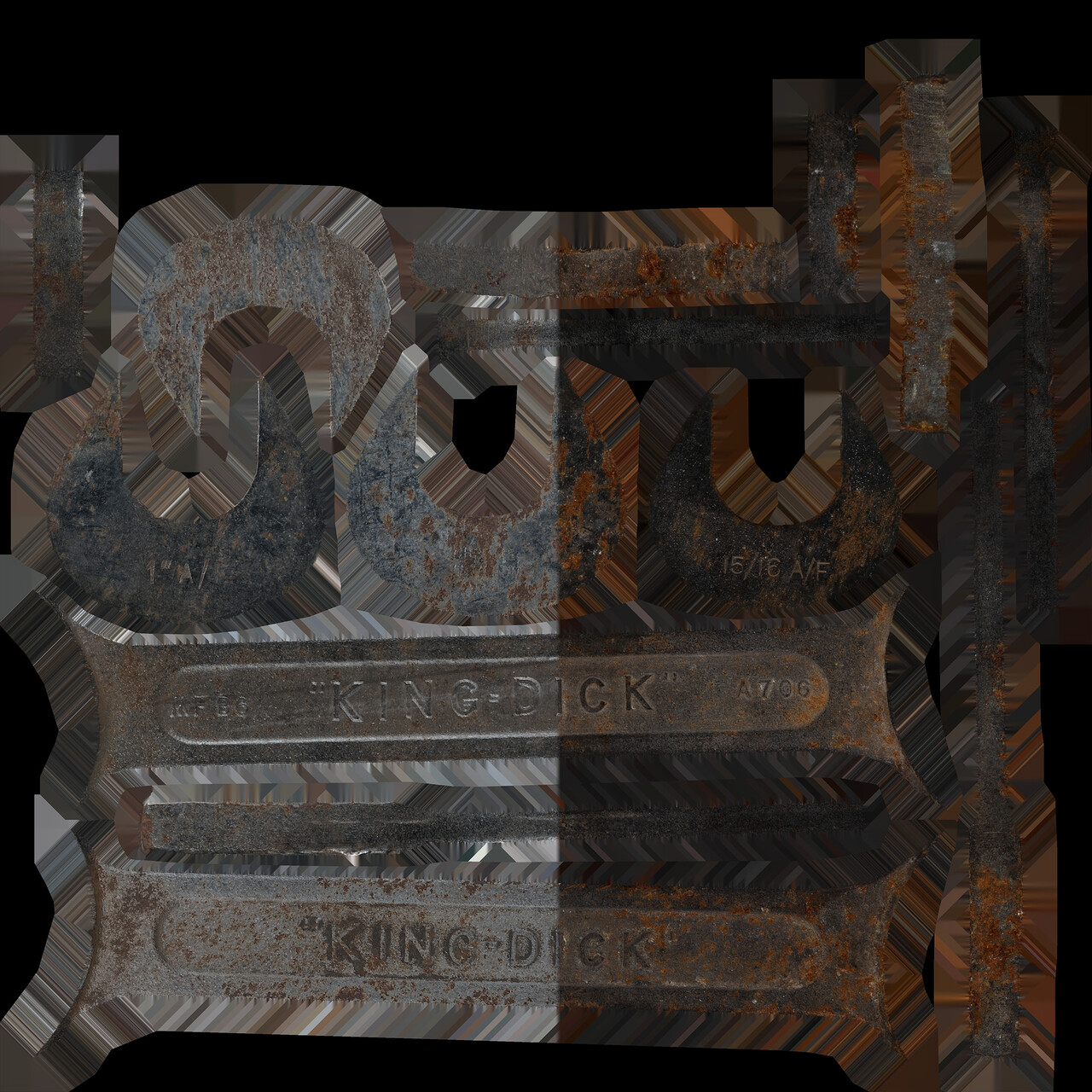

Look at this scan of a chrome spanner. You can see the albedo on the right, and how it's dark grey to almost black in most places. It also intermingles with rust and other imperfections.

Getting this to look good with metalness will be a royal pain in the arse without a hand painted mask to bring it up to the level expected for chrome in a metalness workflow.
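
For what it's worth, applying such a mask is the easy part. A minimal sketch, assuming you already have a hand painted mask (1 = clean metal, 0 = rust/dirt) and have picked a target base color for the metal yourself: `lift_metal_albedo` is a hypothetical helper, and the 0.55 grey below is only a placeholder in the ballpark usually quoted for chrome, so check a measured reference before trusting it.

```python
import numpy as np

def lift_metal_albedo(albedo, metal_mask, metal_base_color):
    """Blend the scanned albedo toward a chosen metal base color.

    albedo:           (H, W, 3) linear scanned albedo
    metal_mask:       (H, W) hand painted mask, 1 = metal, 0 = leave as scanned
    metal_base_color: (3,) linear RGB target for the metal (your choice)"""
    albedo = np.asarray(albedo, dtype=np.float32)
    mask = np.clip(np.asarray(metal_mask, dtype=np.float32), 0.0, 1.0)[..., None]
    target = np.asarray(metal_base_color, dtype=np.float32)
    # Plain linear interpolation: scanned albedo where mask = 0, target where 1.
    return albedo * (1.0 - mask) + target * mask

# Two pixels: dark scanned chrome and rust. Only the chrome is masked in.
scanned = np.array([[[0.05, 0.05, 0.05], [0.30, 0.12, 0.05]]], dtype=np.float32)
mask = np.array([[1.0, 0.0]], dtype=np.float32)
chrome_placeholder = (0.55, 0.55, 0.55)  # assumption, not a measured value

lifted = lift_metal_albedo(scanned, mask, chrome_placeholder)
print(lifted)
```

The painful part is painting the mask around all the rust and imperfections, which no amount of code here does for you.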

End tangent

How do we use it?

Using these extracted maps is completely subjective. It's just data, after all.

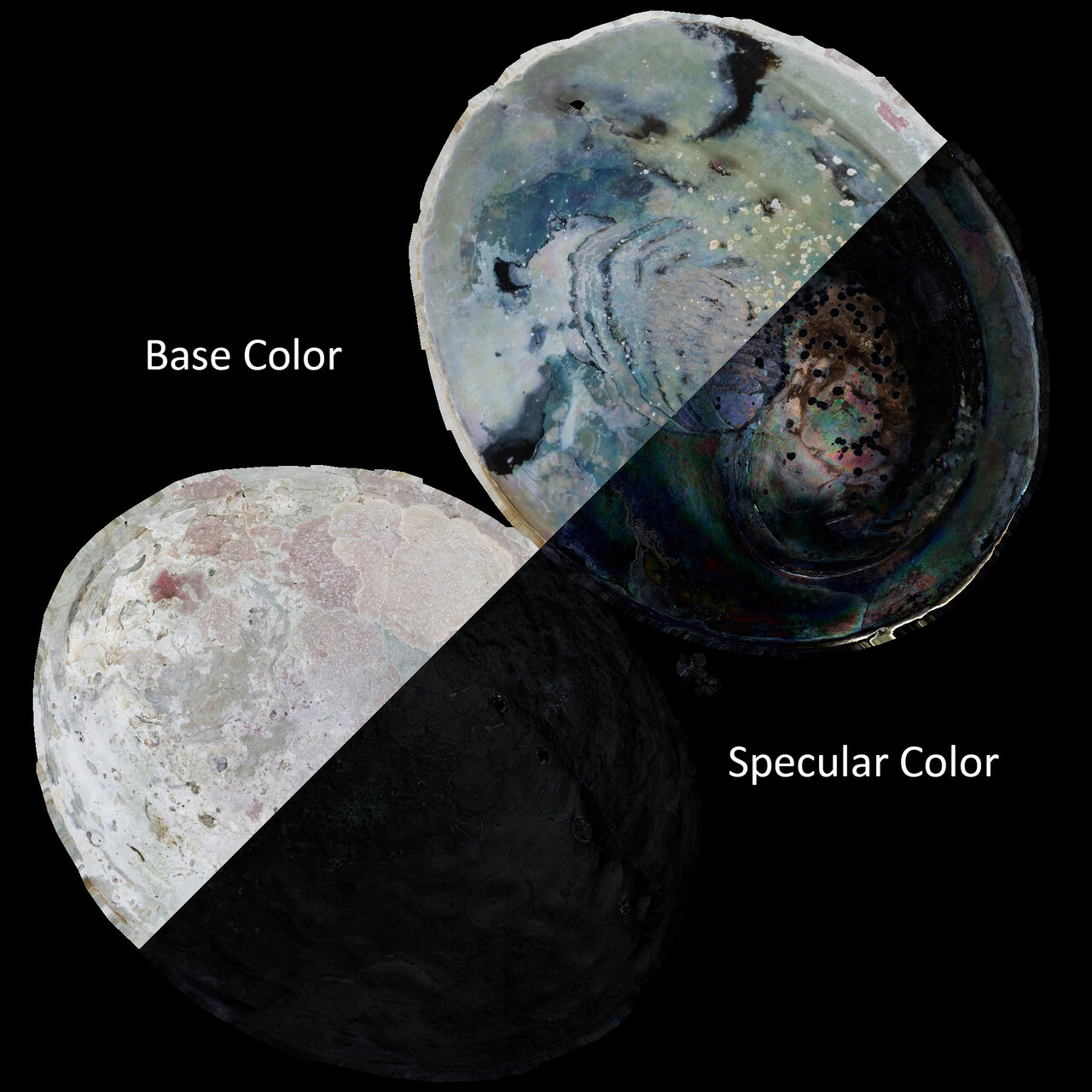

Looking at the vase, you can see just how much data is lost if we only use the cross polarized texture, whereas the parallel polarized texture contains a significant amount of information.

And it's useful data. It tells us which areas have a strong specular response, and it can even give us specular color information.

For instance, I used this data as the specular color map on the paua shell scan I did several years ago.

While it's not perfect, it did look very good and produced a convincing pearlescence effect.

Issues

There are a number of issues when using workflows like this to extract more information.

For instance, on the spanner I shot last year, I should have taken significantly more parallel polarized reference images, as the response changed with every image. I ended up with quite a few areas that had hot spots or were just completely lacking the information I needed.

If you look closely you can see that there are some areas which are darker than others, even though this is a fairly uniform chrome surface.

You can also see just how much the rust changes color under normal lighting conditions.

These issues can be resolved, but how much time would it take? How useful would that be in the end?

So I guess in conclusion: be careful with how you use the data, but it is useful.

It's also damn annoying to get, and 99% of the time you can go without it at all.